Swimming with Alphago

Posted: Fri Mar 17, 2017 7:07 am

by djhbrown

“You know, it doesn't work if you just try to copy Alphago; you have to understand it” - Jennie Shin 2p

Alphago often makes moves that pro commentators call strange, which in light of her successes may not be as daft as they seem.

An analysis of one such “strange” move in a recent game with world #1 Ke Jie, made through the lens of a commonsense Go algorithm, reveals that it's not so strange after all.

Full paper PDF (26 pages) best viewed in Dual Mode, Odd Pages Left

download from:

https://papers.ssrn.com/sol3/papers.cfm ... id=2934932

Re: Swimming with Alphago

Posted: Fri Mar 17, 2017 9:37 am

by sorin

djhbrown wrote:“You know, it doesn't work if you just try to copy Alphago; you have to understand it” - Jennie Shin 2p

Does your method work when applied *before* playing a move, rather than explaining why a move was great *after* human commentators agree on that?

Re: Swimming with Alphago

Posted: Fri Mar 17, 2017 10:57 am

by Uberdude

Btw, her name is Jennie Shen.

Re: Swimming with Alphago

Posted: Fri Mar 17, 2017 2:09 pm

by Uberdude

sorin wrote:Does your method work when applied *before* playing a move, rather than explaining why a move was great *after* human commentators agree on that?

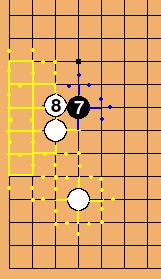

As a test case, I offer the following move. Is Swim similarly enthusiastic?

$$B
$$ +---------------------------------------+
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . X . . . . . . . . . . . . . . . |
$$ | . . . , . . . . . , . . . . . X . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . X . . . . . . . . . . . . . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . , . . . . . , . . . . . , . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . 1 . . . . . . . . . . . . . . . |
$$ | . . O . . . . . . . . . . . . . . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . O . . . . . , . . . . . O . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ | . . . . . . . . . . . . . . . . . . . |
$$ +---------------------------------------+

Re: Swimming with Alphago

Posted: Fri Mar 17, 2017 5:03 pm

by djhbrown

sorin wrote:Does your method work when applied *before* playing a move?

Yes. If you read the full paper, you will see that Swim examines the position before Alphago's "strange" move 7 and comes up with two justifications for making it a candidate. That does not necessarily imply that Swim would choose that move itself, as there are other factors it considers, most notably the balance of perceived territory/influence. Offhand, I can't predict what move Swim would choose without doing a complete simulation of the algorithm; my guess is that it might favour a keima kakari in the lower right, but I can't be sure.

Uberdude wrote:Jennie Shen....

Correction applied, thank you.

As regards your alternative position, where white has an ogeima shimari instead of keima, Swim's justification for black 7 (= your black 1) would be pretty much the same. However, other factors might make it less favoured; a keima is perceived by Swim to create a cluster, but an ogeima is not. Inducing a white push along the 3rd line would strengthen white's colour map to the edge, which would propagate a shadow towards the hoshi stone.

Extract from

https://papers.ssrn.com/sol3/papers.cfm ... id=2818149 :

Colour connection is computed by an iterative colour propagation algorithm.

step 1: a colour-controlled point colours its links and their endpoints.

step 2: a link connecting two singly-coloured points or a singly-coloured point on the second line to a neutral edge point is coloured.

The steps are repeated until no new coloured points or links are discovered.

Each singly-coloured point shadows its links to empty points. Then:

step 1: a point whose links are multiply shadowed by only one colour becomes a shadowed point.

step 2: a shadowed point propagates its shadow along its unshadowed links.

The steps are repeated until no new multiply shadowed points are discovered.
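The colour-propagation steps in the extract can be sketched as a fixed-point loop. This is a minimal Python sketch under loudly stated assumptions, not Swim's actual implementation: a "link" is taken to be an orthogonal adjacency, stones are the only colour-controlled points, and colouring a link to a neutral edge point is taken to colour that edge point as well. The names `propagate_colour`, `neighbours` and `line_of` are hypothetical.

```python
from itertools import product

BLACK, WHITE = "X", "O"


def neighbours(p, size):
    """Assumption: a 'link' is an orthogonal adjacency between two points."""
    r, c = p
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        q = (r + dr, c + dc)
        if 0 <= q[0] < size and 0 <= q[1] < size:
            yield q


def line_of(p, size):
    """Go line of a point, counted from the nearest edge (1 = edge line)."""
    r, c = p
    return min(r, c, size - 1 - r, size - 1 - c) + 1


def propagate_colour(stones, size=19):
    """stones maps point -> colour; each stone is a colour-controlled point.

    Returns (point_colours, link_colours), both mapping to sets of colours.
    A point is 'singly-coloured' when its set holds exactly one colour.
    """
    point_colours = {p: set() for p in product(range(size), repeat=2)}
    link_colours = {}

    def colour_link(p, q, col):
        link_colours.setdefault(frozenset((p, q)), set()).add(col)

    # Step 1: a colour-controlled point colours its links and their endpoints.
    # Under this reading only stones are colour-controlled, so repeating
    # step 1 changes nothing; it is applied once, outside the loop.
    for p, col in stones.items():
        point_colours[p].add(col)
        for q in neighbours(p, size):
            colour_link(p, q, col)
            point_colours[q].add(col)

    # Step 2, repeated until no new point or link is coloured.
    changed = True
    while changed:
        changed = False
        for p, cols in point_colours.items():
            if len(cols) != 1:
                continue
            (col,) = cols
            for q in neighbours(p, size):
                if col in link_colours.get(frozenset((p, q)), set()):
                    continue
                both_single = point_colours[q] == {col}
                # Assumption: colouring the link to a neutral edge point
                # also colours that edge point.
                to_edge = (line_of(p, size) == 2 and line_of(q, size) == 1
                           and not point_colours[q])
                if both_single or to_edge:
                    colour_link(p, q, col)
                    if to_edge:
                        point_colours[q].add(col)
                    changed = True
    return point_colours, link_colours
```

For example, `propagate_colour({(2, 2): BLACK}, size=5)` colours the stone, its four neighbours and the four edge points reached from the second line, then stops. The loop terminates because each pass can only add colours, never remove them.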

That wouldn't turn the three white stones into a single cluster, but it would strengthen the white shadow over the ogeima's weak point.
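The shadow steps from the same extract can be sketched the same way. Again this is a hypothetical reading rather than the paper's code: links are orthogonal adjacencies, any point not listed as singly-coloured counts as "empty", and "multiply shadowed" is read as "receiving shadow along at least two links". The function name `propagate_shadow` is illustrative only.

```python
def neighbours(p, size):
    """Assumption: a 'link' is an orthogonal adjacency between two points."""
    r, c = p
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        q = (r + dr, c + dc)
        if 0 <= q[0] < size and 0 <= q[1] < size:
            yield q


def propagate_shadow(coloured, size=19):
    """coloured maps each singly-coloured point to its colour (hypothetical
    input); every other point is treated as empty.

    Returns a dict mapping each shadowed point to its shadow colour.
    """
    # incoming[q][p] = colour of the shadow cast along link p-q towards q.
    incoming = {}

    def cast(p, q, col):
        incoming.setdefault(q, {})[p] = col

    def link_shadowed(p, q):
        return p in incoming.get(q, {}) or q in incoming.get(p, {})

    # Each singly-coloured point shadows its links to empty points.
    for p, col in coloured.items():
        for q in neighbours(p, size):
            if q not in coloured:
                cast(p, q, col)

    shadowed = {}
    while True:
        # Step 1: a point multiply shadowed by only one colour is shadowed.
        new = {}
        for q, shadows in incoming.items():
            if q in coloured or q in shadowed:
                continue
            colours = set(shadows.values())
            if len(shadows) >= 2 and len(colours) == 1:
                new[q] = colours.pop()
        if not new:
            break  # no new multiply shadowed points
        shadowed.update(new)
        # Step 2: each new shadowed point propagates along unshadowed links.
        for p, col in new.items():
            for q in neighbours(p, size):
                if q not in coloured and not link_shadowed(p, q):
                    cast(p, q, col)
    return shadowed
```

With two singly-coloured white points at (1, 1) and (1, 3) on a 5x5 board, only (1, 2) becomes shadowed; if one of them is black instead, the two shadows conflict and nothing is shadowed.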

Re: Swimming with Alphago

Posted: Sat Mar 18, 2017 4:23 am

by luigi

djhbrown, after reading some of your posts, I must address the elephant in the room: Can your system actually play Go? Does it exist as software as opposed to a mere logical construct?

Re: Swimming with Alphago

Posted: Sat Mar 18, 2017 4:53 am

by John Fairbairn

If the programming strategy is to establish candidate moves and then choose one of these on the basis of further criteria such as playouts, this can be done with high efficiency simply by selecting as candidate moves those which are one intersection away from the last move played. If you refine this by establishing a region in which the last N moves have been played, and choosing candidates from within this region, you can achieve extreme efficiency at minimal computational cost.
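The proximity filter described above is easy to write down, which is part of the point: it is cheap, but it can never propose a move far from recent play. A minimal sketch, assuming "one intersection away" includes diagonals and taking the "region" of the last N moves to be their bounding box widened by a margin; both readings and the function names are hypothetical.

```python
def proximity_candidates(last_move, occupied, size=19):
    """Empty points within one intersection of the last move
    (diagonals included, an assumption)."""
    r, c = last_move
    return {
        (r + dr, c + dc)
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
        and 0 <= r + dr < size
        and 0 <= c + dc < size
        and (r + dr, c + dc) not in occupied
    }


def region_candidates(moves, occupied, n, size=19, margin=1):
    """Empty points inside the bounding box of the last n moves, widened by
    `margin` and clipped to the board (hypothetical notion of 'region')."""
    recent = moves[-n:]
    rows = [r for r, _ in recent]
    cols = [c for _, c in recent]
    r0, r1 = max(min(rows) - margin, 0), min(max(rows) + margin, size - 1)
    c0, c1 = max(min(cols) - margin, 0), min(max(cols) + margin, size - 1)
    return {
        (r, c)
        for r in range(r0, r1 + 1)
        for c in range(c0, c1 + 1)
        if (r, c) not in occupied
    }
```

After moves at (3, 3) and (3, 5), `region_candidates` with the default margin offers 13 nearby points, and a distant point such as (15, 15) is never among them: the filter structurally excludes the tenuki.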

As impressive as this Candidate Refinement According to Proximity system is, the result will not be good go. For maybe 90% or even more of the game you could replicate the pros' moves, but the problem comes with the breakout moves, or the tenukis. As I see it, that's where AlphaGo stood out, and that's the aspect that pros seem to have latched onto. I don't recall any comment where the pros thought AlphaGo was tactically stronger, at least within a range of moves that we'd usually consider tactical.

In making those breakout moves, I'd be a bit surprised if AlphaGo was relying on things like influence functions. It surely needs a more discriminating way of comparing remote areas. And maybe that discrimination can never be explained in our terms, simply because it depends on very long playouts?

Re: Swimming with Alphago

Posted: Sat Mar 18, 2017 6:02 am

by Bill Spight

John Fairbairn wrote:

In making those breakout moves, I'd be a bit surprised if AlphaGo was relying on things like influence functions. It surely needs a more discriminating way of comparing remote areas. And maybe that discrimination can never be explained in our terms, simply because it depends on very long playouts?

Influence functions proved to be a dead end, at least for now. Zobrist introduced the first one almost 50 years ago, and researchers never came close to agreeing on which function was best; about the only point of agreement was that influence decreases with distance. And Monte Carlo playouts were not a breakthrough: early Monte Carlo systems did not produce good go, either. The major breakthrough came later, a little over a decade ago, with Monte Carlo Tree Search (MCTS), which enabled Monte Carlo systems to work well.

AlphaGo combines MCTS with neural networks and deep learning. The initial training of the neural networks was based on what? Human play. That is one reason that I think that pros will not find it too difficult, given hundreds of games by AlphaGo and its successors, to explain their play in human terms. I find it interesting that one trademark of AlphaGo's style, the early shoulder hit, was prefigured by Go Seigen in his 21st century go writings.

Another reason is human intelligence and creativity. Computers have been better than MDs at initial diagnosis for decades, yet in research where MDs used computer-generated protocols for initial diagnosis, after a while the MDs got better than the computer protocols. I think that our current crop of computer programs, even if they are unable to explain their play in human terms, will help to produce better go players at both the amateur and professional levels.
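To make "influence decreases with distance" concrete, here is one arbitrary distance-decay influence function. It is a generic illustration, not Zobrist's function or any published one; the exponential decay, Manhattan distance and cutoff radius are all assumptions, and the fact that each could be chosen differently is exactly the lack of agreement described above.

```python
def influence(stones, size=19, decay=0.5, radius=4):
    """stones maps point -> +1 (Black) or -1 (White).

    Each stone contributes sign * decay**d to every point within Manhattan
    distance d <= radius; contributions are summed. Positive totals lean
    Black, negative totals lean White, and 0.0 is neutral or contested.
    """
    field = {}
    for r in range(size):
        for c in range(size):
            total = 0.0
            for (sr, sc), sign in stones.items():
                d = abs(r - sr) + abs(c - sc)
                if d <= radius:
                    total += sign * decay ** d
            field[(r, c)] = total
    return field
```

A lone black stone at (9, 9) gives 1.0 on its own point and 0.5 next to it; a black stone at (9, 8) and a white stone at (9, 10) cancel exactly on the point between them.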

Re: Swimming with Alphago

Posted: Sat Mar 18, 2017 7:26 am

by djhbrown

Nice one

Re: Swimming with Alphago

Posted: Sat Mar 18, 2017 3:40 pm

by sorin

John Fairbairn wrote:I don't recall any comment where the pros thought AlphaGo was tactically stronger, at least within a range of moves that we'd usually consider tactical.

I remember lots of places where pros were amazed by how quickly AlphaGo (especially the Master version) "knocks out" top pros in close tactical fights. Basically, Master seems to come out on top of every close fight.